CHI 2026: Papers and Presentations on Accessibility

March 23, 2026

Accessibility-related papers, presentations, and workshops from CREATE researchers at CHI 2026, the ACM CHI conference on Human Factors in Computing Systems. We appreciate your patience as we continue to update this page.

Congratulations to CREATE associate director Jon E. Froehlich, who will be honored with a Societal Impact Award at the 2026 SIGCHI Conference in Barcelona!

Papers

Ability heuristics for conducting accessibility inspections

Claire Mitchell, Judy Kong (Ph.D. student), Jesse Martinez (Ph.D. student), Shaun Kane (Ph.D. graduate and CREATE advisory council member), Amy Ko (faculty member), Alexis Hiniker, and Jacob O. Wobbrock (CREATE associate director)

Artists on a Decade of AI Evolution: An Interview Study of Affordances, Culture, and Artistic Practice with Machine Learning

Téo Sanchez, Mariya Dzhimova, Stacy Hsueh (former CREATE postdoctoral researcher), Sarah Fdili Alaoui, Vaynee Sungeelee, and Baptiste Caramiaux

GeoVisA11y: An AI-based Geovisualization Question-Answering System for Screen-Reader Users

Chu Li (Ph.D. student), Rock Yuren Pang (Ph.D. student), Arnavi Chheda-Kothary (Ph.D. student), Ather Sharif (CREATE Ph.D. graduate), Henok Assalif, Jeffrey Heer

Best Paper

“I Don't Trust it, but I Use it”: Navigating Trust, Privacy, and Identity in Disabled People’s Use of Generative AI

Jazette Johnson (postdoctoral researcher), Aaleyah Lewis (Ph.D. student), Jennifer Mankoff (Director of CREATE), Olivia Banner (CREATE Director of Strategy and Operations)

Interface Support for Evaluating Disability Bias in AI Generated Images

Kelly Avery Mack (Ph.D. graduate), Lucy Jiang (Ph.D. student), Lotus Zhang (Ph.D. student), Leah Findlater (CREATE associate director)

Like, Comment & Caption: A Decade of Social Media Video Caption Research (2015–2025)

Huong Nguyen, Emma J McDonnell (Ph.D. graduate), Lloyd May, Alexander Druzenko, Zoobia Saifullah Syeda, Mark Cartwright, Sooyeon Lee

Honorable Mention

Nonvisual Support for Understanding and Reasoning about Data Structures

Brianna L Wimer, Ritesh Kanchi, Kaija Frierson, Venkatesh Potluri (Ph.D. graduate), Ronald Metoyer, Jennifer Mankoff (Director of CREATE), Miya Natsuhara, and Matt Wang

TaskAudit: Detecting functiona11ity errors in mobile apps via agentic task execution

Mingyuan Zhong (Ph.D. student), Chen, X., Kyi, D.W., Li, C., James Fogarty (CREATE associate director), and Jacob O. Wobbrock (CREATE associate director)

Posters

Access to Interpretation: How Formal Cues Ground Interpretive Alt Text for Paintings

Vera L. Zhong, Lucy Jiang (Ph.D. student), Kathryn E. Ringland

AutismCarebot: Emotion-First, Source-Aware Conversational Support for Autistic Users

Shristi Srivastava, Annuska Zolyomi (CREATE associate director), Dong Si

Kane, Jayant, Wobbrock, Ladner: 2025 SIGACCESS ASSETS Paper Impact Award

March 6, 2026

A paper on mobile device accessibility, co-authored by CREATE leaders, was honored with the 10-Year Lasting Impact Award at the International ACM SIGACCESS Conference on Computers and Accessibility (ASSETS ’25).

The 2009 ACM ASSETS paper, Freedom to roam: A study of mobile device adoption and accessibility for people with visual and motor disabilities, found that people with disabilities had to find ways to adapt to inaccessible technology. The team developed guidelines for more accessible and empowering design.

The lasting impact award recognizes research papers “that [have] had a significant impact on computing and information technology that addresses the needs of persons with disabilities.”

From left to right: Jacob O. Wobbrock, Shaun K. Kane, award presenter, Richard Ladner, and Chandrika Jayant

The authors are:

- Shaun K. Kane, CREATE Ph.D. graduate and Advisory Board member

- Chandrika Jayant, former Ph.D. student of Richard Ladner

- Jacob O. Wobbrock, CREATE associate director and former CREATE co-director

- Richard Ladner, CREATE's inaugural Director for Education, Emeritus and a continuing CREATE faculty member

Read more about the award:

- The 2025 SIGACCESS ASSETS Paper Impact Award, AccessComputing

- Professor, Ph.D. alum honored for smartphone accessibility research, UW iSchool

Richard Ladner: SIGCSE Outstanding Contribution to CS Education Award

March 5, 2026

Richard Ladner, CREATE's Founding Director for Education Emeritus and a continuing CREATE faculty member, received the 2026 Outstanding Contribution to Computer Science Education Award at the ACM SIGCSE Conference.

The award cited Ladner’s contributions to accessible computer science education at the K-12, college, and graduate levels and was given “in recognition of long-lasting efforts to develop new technologies and activities to engage people, particularly children, in creative learning experiences based on computational literacy for discovery and expression.”

Richard Ladner, CREATE founding Director for Education Emeritus and Professor Emeritus, Allen School of Computer Science & Engineering

Ladner’s work has had a profound impact on the accessibility of CS education, the inclusion of accessibility in the computing curriculum, and the inclusion of disability in broadening participation activities.

The ACM SIGCSE Award for Outstanding Contribution to Computer Science Education honors researchers who have made a long-lasting impact and significant contribution to computing education.

Through mentoring and advocacy, Ladner has directly helped hundreds of students with disabilities to gain exposure to computing as a potential career path. A handful of examples:

- AccessComputing's principal investigator and founder. AccessComputing has supported over 1,500 high school, undergraduate, and graduate students in building skills and connections with mentors and professional opportunities in computing-related fields.

- Summer Academy for Advancing Deaf and Hard of Hearing in Computing, co-founder. The summer academy helped students jump start their academic careers by spending the summer taking computing courses at the UW’s Seattle campus. Three of those students became computing faculty themselves.

- AccessCSforAll's Founding PI and continuing advisor. AccessCSforAll makes accessible computer science curriculum and other resources available to K–12 students with disabilities. Their paper describing the work received the SIGCSE 2019 Best Paper Award.

- “Teaching Accessibility” co-editor with Alannah Oleson and CREATE faculty member Amy Ko. The online book provides computing instructors with tools and resources to help them incorporate accessibility into their teaching.

- More papers: The importance of teaching and learning about accessibility, and Outlining strategies for integrating accessibility into various computer science courses.

This article was excerpted from the Allen School article about Ladner's career at UW as a professor in computer science & engineering.

Jon E. Froehlich Honored with SIGCHI Societal Impact Award

March 3, 2026

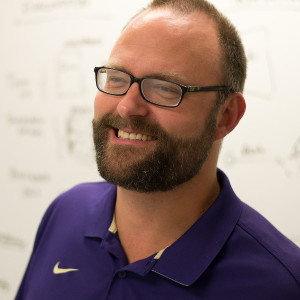

CREATE associate director Jon E. Froehlich received a Societal Impact Award at the 2026 SIGCHI Conference for addressing accessibility challenges through HCI and AI.

Froehlich, a professor of computer science and engineering in the Allen School, directs the UW Makeability Lab, utilizing human-computer interaction (HCI) and machine learning to tackle high-impact socially relevant problems. His work has led to improved city planning and sidewalk infrastructure across the globe, and he has developed technologies that have enabled blind and low-vision users to prepare meals, participate in sports and even engage with children’s artwork.

His work on accessible maps (CHI 2019 Best Paper award, UIST 2025) included the first-ever screen reader for Google Street View and real-time sound recognition for deaf and hard-of-hearing users (CHI 2020, CHI 2022, CACM 2022); it has directly impacted product teams at Google and Microsoft. This combination of civic impact, policy influence, and industry translation is rare in HCI; that Froehlich has sustained it across a decade of work is exceptional.

As fellow CREATE associate director Jacob O. Wobbrock noted, "His research in urban accessibility has achieved what few HCI researchers ever accomplish: direct, measurable change on a global scale. Specifically, Froehlich's work has affected how cities invest in pedestrian infrastructure, how communities and governments in 40+ cities plan for accessibility, and how federal agencies define walkability and accessibility data standards. Froehlich's societal impact has been measurable and huge!"

Jon E. Froehlich, CREATE Associate Director

- Professor, Allen School of Computer Science & Engineering

- Director, Makeability Lab

- Associate Director of Tech Transfer and Outreach of PacTrans

- Co-founder of Project Sidewalk

- Core Faculty, Urban Design and Planning Interdisciplinary Ph.D. program

“This recognition belongs to my incredible students and collaborators in the Makeability Lab who work tirelessly to design more accessible, equitable futures and pursue research in accessibility, education, and environmental sustainability.”

- Jon E. Froehlich, in Allen School news

About the award

SIGCHI, the ACM Special Interest Group on Computer-Human Interaction, recognizes mid-career to senior researchers whose HCI work demonstrates social benefit.

Project Sidewalk - real and lasting impact

One project developed in Froehlich's Makeability Lab, Project Sidewalk, has been used by U.S. cities to help fund initiatives to improve the safety and accessibility of their pedestrian infrastructure. In Newberg, Oregon, Project Sidewalk collected more than 17,000 labels and showed that sidewalks were inaccessible, especially around voting centers and bus stops, prompting the city council to authorize $50,000 for immediate sidewalk repairs and establish a grant program to help homeowners fix their own sidewalks. After Mendota, Illinois, experienced devastating fires in 2022, community partners used Project Sidewalk data to secure a $3.6 million Illinois Transportation Enhancement Program grant to rebuild their sidewalks.

Past CREATE recipients

Froehlich joins CREATE leaders who have received the SIGCHI Societal Impact Award (formerly "Social Impact Award"):

- Jennifer Mankoff, CREATE Director, in 2022

- Juan Gilbert, former CREATE Advisory Board member, in 2021

- Jacob O. Wobbrock, CREATE associate director, in 2017

- Jonathan Lazar, CREATE Advisory Board member, in 2016

- Richard Ladner, CREATE's Director for Education Emeritus, in 2014

Adaptive Solutions Mini-Hackathon: 2026 Recap

February 26, 2026

In January, CREATE and HuskyADAPT teamed up with the King County Library System (KCLS) to host a mini-hackathon to brainstorm and prototype solutions to accessibility problems.

After an introduction to five real-world requests submitted by the disability community, participants self-selected their projects and got to work building their prototypes. These diverse teams of makers, researchers, disability professionals, and volunteers were assisted by community co-designers and design leads.

They worked in KCLS's Bellevue Makerspace using moldable thermoplastic, cardboard, glue, tape, fabric, sewing machines, etc.

Participant feedback

"I genuinely had an enjoyable time during the event; it was a new and meaningful experience for me. I was a little confused at first, but my teammates and the people I connected with were very encouraging, which made the experience comfortable and engaging. I learned a lot throughout the day and really appreciated the opportunity for this collaborative event."

"I truly appreciated the energy, creativity, and strong community engagement in the room. It was inspiring to see so many families and learners exploring hands-on innovation together."

Projects at the 2026 event

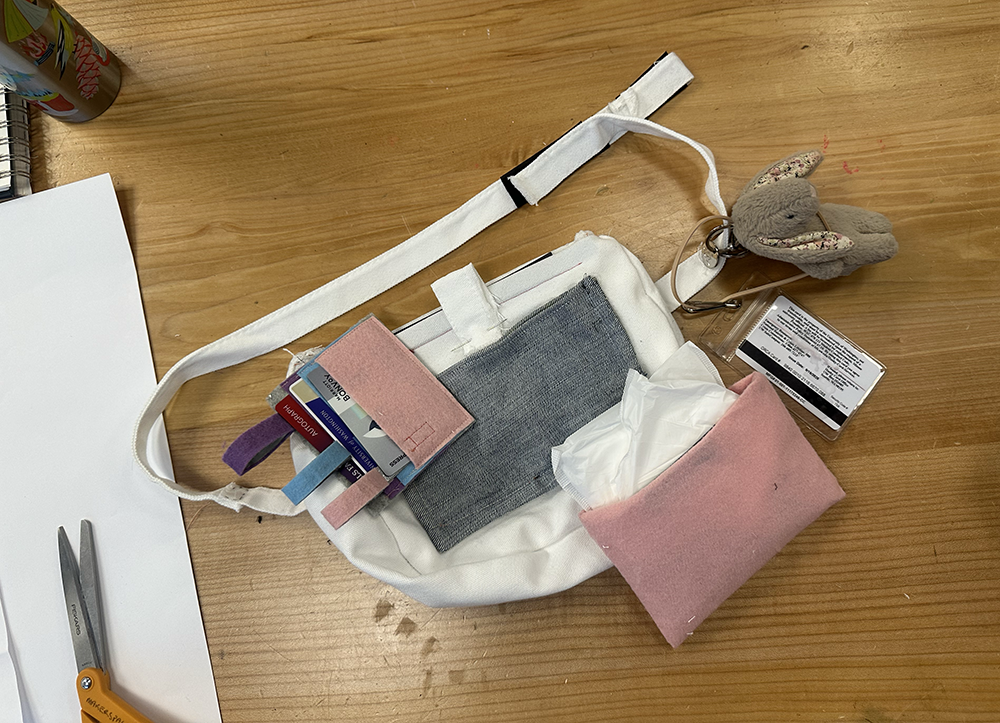

Accessible fanny pack

Goal: Design an easily organized fanny pack for a student with developmental disabilities who struggles with zippers, jumbled contents, and grasping specific items. Contexts include using the pack one-handed while communicating, purchasing, riding the bus, and other daily activities.

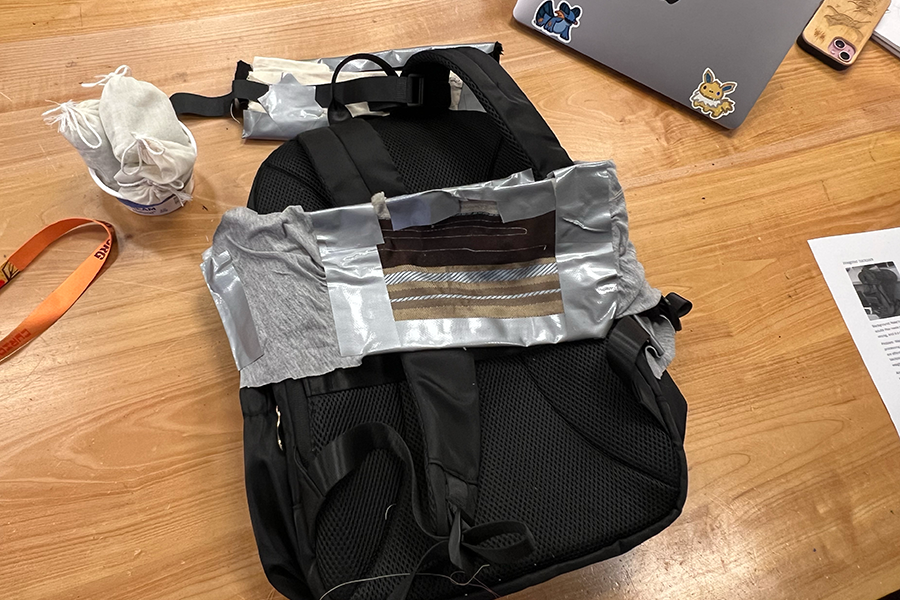

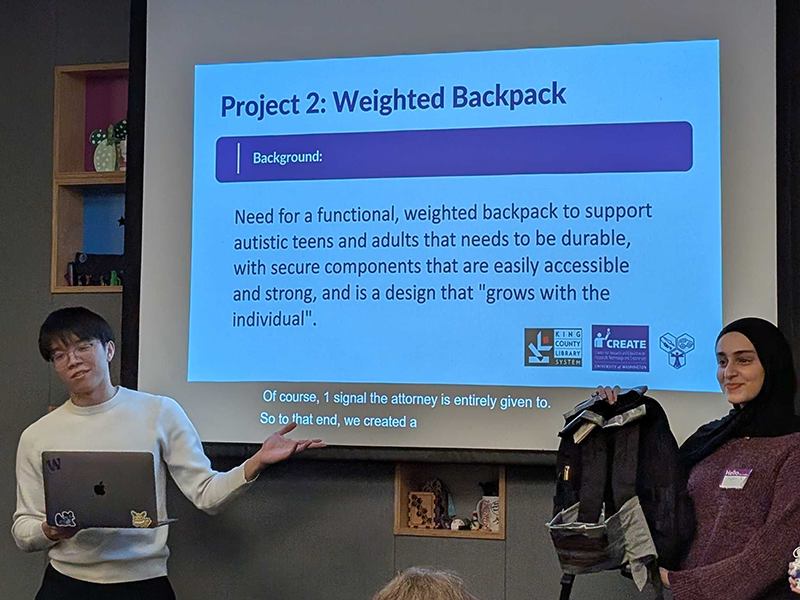

Weighted backpack

Goal: Adapt a weighted backpack for autistic teens and adults to support sensory processing differences. Challenges included design for durability, affordability, and visual aesthetics, with components that are easily accessible. The design should grow with the individual.

Easy-to-grasp hair clip

Goal: Create a universal design device to add usability to butterfly/claw-style hairclip handles to make grasping and using easier. A significant challenge was to design an adaptation that would work with a wide variety of hairclip designs. Members' lived experience with arthritis informed the team's work.

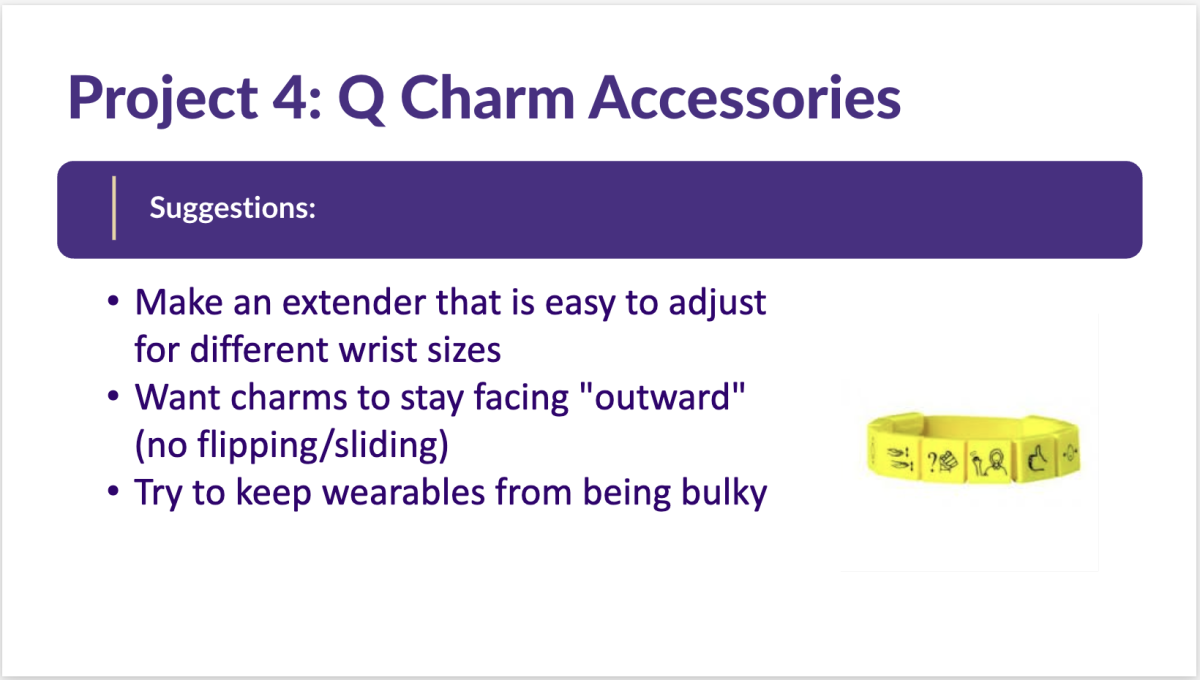

Q Charms accessories

Q charms are jewelry-based tokens for Augmentative and Alternative Communication (AAC) icons. Goal: Using 3D-printed tokens that fit on a silicone wristband or bracelet, design an easily adjustable bracelet extender and also a lanyard that can have Q charms attached and detached. Challenges include avoiding bulk and keeping charms from spinning to the inside.

Customized Q Charms

Goal: Create an accessible, open source software pipeline for creating digital custom Q charm designs in CAD and design a Q charm that users can customize with any image of their choice on the spot.

Acknowledgements

We thank KCLS for the use of their Bellevue makerspace and HuskyADAPT students for their skillful and enthusiastic organization of the event.

Hackathon organizers

Annika Pfister

Hackathon co-host and HuskyADAPT Outreach Chair; 3rd-year Ph.D. student in Electrical & Computer Engineering

Tanvi Bachu

Hackathon co-host and HuskyADAPT Outreach Chair; 3rd-year undergraduate student in Electrical and Computer Engineering

Megan Willan

Adult & Maker Services Librarian at King County Library System

Project design and feedback panelists

- Brennan Johnston, Assistive Technology Support Technician for Washington Assistive Technology Act Program (WATAP)

- Dr. Gaurav Chaudhari, software engineer at Google

- Kate Glazko, UW CREATE Ph.D. student and student representative; 3rd-year Ph.D. student and NSF grad student fellow at the Paul G. Allen School of Computer Science & Engineering

- Megan Willan, Adult & Maker Services Librarian at King County Library System

- Sarah Lemke, MOT, OTR/L, a licensed and board-certified occupational therapist at the University of Washington Autism Center

Hackfest project for children with cerebral palsy goes on to win design awards

January 29, 2026

Internationally recognized research to address a real-world need got its start at a CREATE hackfest.

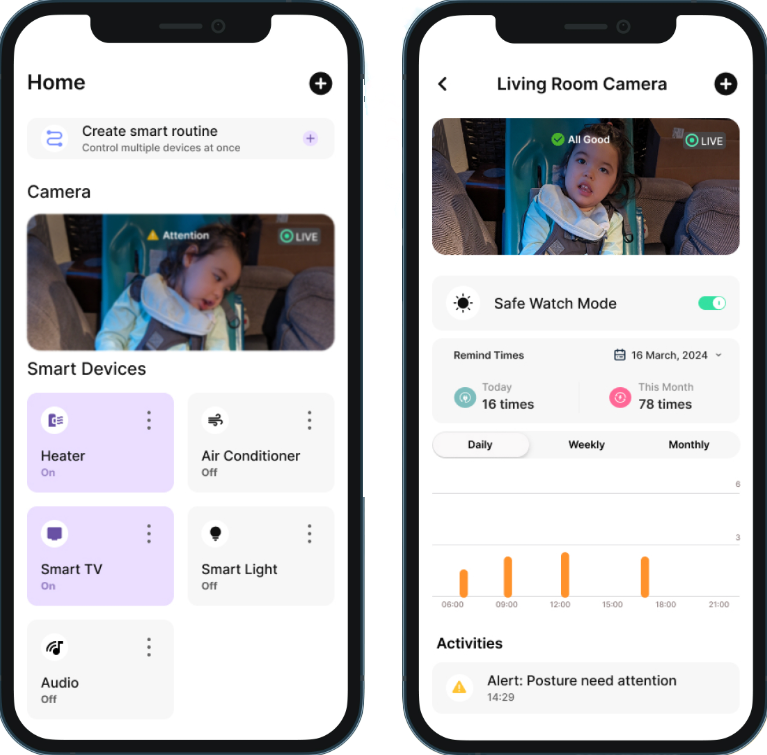

In 2024, students Lige Yang and Richard Li teamed up at the CREATE AI+Accessibility Hackfest to explore the concept of an AI tool that could monitor a person’s seated position, identify when they are in a posture that could cause injury or worsen an existing condition, and alert a caretaker with accurate, recommended corrections. Yang has continued developing the design.

From hackfest idea submitted by a parent...

The project idea was submitted by Max Smoot, the parent of a two-year-old child with severe motor delays due to cerebral palsy. Smoot, who participated in the project development, described the need for a tool that exceeds what can be supported by current medical equipment and assistive technology.

The scenario: Constant vigilance and accurate intervention

- A growing child’s physical development can outpace their core and spinal muscle control, making it difficult to maintain a safe, stable seated posture.

- Poor positioning can make breathing difficult, can escalate to vomiting or aspiration, and can contribute to strain over time.

- Caregivers, often balancing many responsibilities, must monitor posture closely and intervene quickly to reposition the child.

Lige Yang, then a master's degree student in Human Centered Design and Engineering, led design. Yang is now a product designer specializing in AI-powered UX, accessible health technology, and human-centered systems.

Richard Li, a Ph.D. student at the Paul G. Allen School of Computer Science & Engineering, contributed to the sensing, AI model explorations, and the technical concept. His work focuses on applying sensing and machine learning to novel interactions, accessibility, and health.

Max Smoot, a systems engineer in the Puget Sound area, was the primary developer responsible for system implementation, led the testing and validation effort, and collaborated on key aspects of the system architecture.

The team won second place at the hackfest by demonstrating technical feasibility, including using computer vision models to detect poor posture, formulate intervention recommendations, and send push alerts to caregivers.

... To award-winning design

After the hackathon, Yang joined the Neotic company, where she led project direction on Veya, focusing on the smart home wellness feature. She conducted usability tests and optimized the user experience. She engaged with industry experts at AI and health experience design events and was able to expand the product's scope.

Two design events led to highly competitive professional milestones and awards for Veya and Yang: the MUSE Design Award and the International Design Awards Bronze Award. Recently, Yang presented the design at the International Design Awards in Thailand.

International Design Awards Bronze Prize

Company: Neotic

Lead Designers: Lige Yang

Project Location: Seattle

Prize: Bronze in Family & Children / Children's Health and Wellness Products

Entry Description: Unlike wearable monitors that are uncomfortable, unsuitable for special chairs, and built for healthy bodies, Veya is designed for children with cerebral palsy. Its calm, accessible interface reduces stress in urgent moments, reassuring families while easing the burden of constant caregiving.

Currently, Yang is dedicated to establishing accessibility-first design principles, driving AI design advancements in the accessibility field.

Inspiration from the hackathon and advisors

Yang shared her thanks to the hackfest organizers (Kate Glazko, Jerry Cao, Venkatesh Potluri, Tony Fast, and Kathleen Voss) and community for the platform that brought attention to real-world accessibility needs and sparked her interest in designing and developing a solution.

“The hackathon revealed the vast, unspoken accessibility needs in the real world, sparked my passion as a designer to use technology to shine a light on these unheard communities,” says Yang.

- Lige Yang, MS 2024, Human Centered Design and Engineering

She also credits CREATE Director Jennifer Mankoff for advising her to talk with different specialists. Yang followed the advice and met with CREATE Advisory Board member Shaun Kane and Brennen Johnston, Assistive Technology Support Technician at WATAP, a CREATE community partner. Both provided excellent advice centered on making sure the AI doesn't mislead users, considering that caregivers, who are already facing stress, need a very shallow learning curve. And, of course, the system must be designed with accessibility in mind from the start.

Hala Annabi to lead new UW Institute for Neurodiversity and Employment

October 21, 2025

CREATE congratulates faculty member Dr. Hala Annabi on her new role as the Founding Director of the new UW Institute for Neurodiversity and Employment.

The institute will bring together an interdisciplinary team of leading scholars, practitioners, and employers. Together they will work to build the capacity of the UW, Washington state, and the nation to create neuroinclusive employment opportunities and advance the career possibilities for neurodivergent people.

Annabi is a leading scholar on neurodiversity and employment and is an associate professor in the Information School. Her work in this space includes the publication of a series of Neurodiversity @ Work Playbooks that make a case for hiring neurodivergent people and offer concrete instructions for supporting their growth and career development.

Removable barriers to employment for neurodivergent adults

Studies suggest that up to 20% of the U.S. population is neurodivergent. Neurodivergent adults (such as those on the autism spectrum, or with attention deficit hyperactivity disorder (ADHD), dyslexia, dyspraxia, dyscalculia, or other cognitive differences) experience significant barriers to inclusion in education and employment due to disabilities that often aren’t obvious.

Research shows that only 25% of autistic adults remain consistently employed over time, and just 67% of adults with ADHD are employed, compared to 87% employment among adults without ADHD. Accordingly, efforts to improve the neuroinclusivity of academic institutions and workplaces have significant potential for impact on individuals, families and the U.S. economy.

“The lower education and employment outcomes are largely attributed to education and workplace environments that were designed to reinforce normative expectations,” said Annabi. “When learning and work environments are designed for neurodiversity — and managers and teachers are trained to be neuroinclusive — neurodivergent individuals achieve far better outcomes,” she noted.

$15M launch grant from the Canopy Neurodiversity Foundation

The Canopy Neurodiversity Foundation awarded a $15 million grant to the UW iSchool to support the launch of the institute.

“The Institute for Neurodiversity and Employment is set up to make a significant difference — not just at the University of Washington, but for communities all over our state,” said Laurie Ackles, executive director of the Canopy Neurodiversity Foundation. “This institute will build on Canopy’s vision for a truly neuroinclusive workforce, dramatically expanding what’s possible in our state.”

Housed in the iSchool, the Institute will integrate faculty, research and support from the Michael G. Foster School of Business and the Paul G. Allen School of Computer Science & Engineering, with additional collaboration from UW Medicine and the School of Social Work.

“The new institute will build upon the outstanding neurodiversity work of Dr. Annabi,” said Anind K. Dey, Dean of the UW Information School. “Adding the deep expertise of our cross-campus collaborators, along with Canopy and other community partners, we will create truly multidisciplinary, innovative and impactful solutions that will transform Washington’s education and employment spaces — including here at the UW.”

“At present, research addressing lifespan issues such as employment is happening in silos across various disciplines, limiting our ability to develop comprehensive solutions,” said Annabi. “By convening a broad coalition of partners across the neurodiversity, employment and academic communities, we can move beyond isolated efforts toward innovative, systems-level change — driven by those with lived experience and deep expertise.”

Annabi's vision for the Institute and the UW

The Institute’s work will focus on five pillars: translational research on neurodiversity and employment, applied professional education and training, community empowerment across Washington state, advocacy efforts to create and strengthen neuroinclusive policies and practices statewide, and direct engagement with UW leadership to make the university a premier destination for neurodivergent faculty, staff, clinicians and students.

Annabi is particularly enthusiastic about the UW’s commitment to ‘walk the talk’ by committing, through the Institute, to neuroinclusive employment practices.

“The UW recognizes that employment is an important component of a person’s quality of life and the equitable distribution of societal resources and power,” said UW Provost Tricia Serio. “As one of the state’s largest employers, we have a vital role to play in modeling ways to increase support for neurodivergent people and break down the persistence of barriers in post-secondary education and the workplace that they face. We are thrilled to channel this work through the Institute for Neurodiversity and Employment.”

The UW Institute for Neurodiversity and Employment will launch activities and programming in 2026.

This article was adapted from the UW News article by Dana Robinson Slote.

2025 Race, Disability and Technology Funded Research

September 22, 2025

In our ongoing efforts to support new avenues in research on race, disability, and technology, CREATE funded three new Race, Disability, and Technology (RDT) grants in 2025.

We are happy to report that this year we received more applications for the RDT grants than in previous years. We also received more applications from outside of CREATE, possibly a sign of our increased outreach to and visibility on campus, and certainly an indication of the importance of this grant mechanism to research efforts across the university.

Tier I project:

Digital Code-Switching and Masking in GAI Use for Multilingual and Multicultural People with Disabilities

CREATE Ph.D. student Aaleyah Lewis, with advisor James Fogarty, received a Tier I grant to explore how multilingual and multicultural people with disabilities engage in code-switching, in masking, and in the intersection of these practices in their daily digital lives.

Tier I project:

Health equity and telemedicine in a rural mining community in Brazil

Jonathan Warren, a UW professor of International Studies, and Dr. Rute Maia, researcher of Social Sciences, Federal University of Rio Grande do Norte and a 2023–24 UW visiting scholar, were also awarded a Tier I RDT grant for a project about health equity and telemedicine in a rural mining community in Brazil.

Tier II project:

Reimagining accessibility technology in special education: A community-based approach to supporting students and families of color

CREATE awarded its first Tier II RDT grant to a team led by Carmen Gonzalez. Working with Seattle Public Schools, Dr. Gonzalez’s team will capture the lived experiences of 20 families who use accessibility technology in special education settings.

The team will talk to families from urban and rural areas, giving families control to guide the conversations and review what they share before it’s added to the digital storytelling archive. The collected stories will help create a professional development program for educators that challenges racism and ableism and better prepares them to support students who use accessibility tools.

Gonzalez is an associate professor in the UW Department of Communication and a co-director of the Center for Communication, Difference, and Equity and the CCDE’s Health Equity Action Lab.

With new NIH funding, CREATE postdoc Bethany Sloane continues research for children with cerebral palsy, motor delays

September 15, 2025

CREATE postdoctoral researcher Bethany Sloane has been working to expand power mobility options for young children with cerebral palsy and other mobility delays. A new NIH K23 Mentored Patient-Oriented Research Career Development Award will allow her to pursue her research for the next four years.

Exploration and self-initiated mobility are known to support growth in learning, communication, social skills, and play. Yet, due to limited training, funding, or access to different types of devices, powered mobility devices are often underused in early intervention and pediatric therapy settings.

Bethany Sloane’s research is focused on addressing these issues, to ensure that children under the age of three have opportunities to explore their environments and participate in daily life through mobility. She aims to create training that will enable therapists and caregivers to use Permobil® Explorer Mini mobility devices for in-home therapy.

The grant will also allow Sloane to support therapists and collect data, notably on how consistently the devices are used and any issues that arise. Sloane hopes to broaden the program to include more regions within Washington and Oregon.

Sloane is thankful for support from CREATE and mentorship from CREATE associate director Heather Feldner, who, she said, “helped me understand the grant submission process in general and the NIH grant process in particular.”

“It’s been an incredible and rewarding experience to mentor Bethany,” Feldner noted. “It’s rare to be able to work with someone who already has outstanding clinical expertise and a genuine eagerness to learn across disciplines, coupled with a remarkable drive to expand access to technologies that empower children through self-initiated mobility. The NIH K23 award is a testament of Bethany’s hard work and growth during her postdoc, and I know this work will set her up for continued research success in the future.”

An interdisciplinary CREATE collaborator

Sloane has been a practicing physical therapist since 2009. Her doctorate in physical therapy led to practice in pediatrics, and then to a pediatric physical therapy residency at Oregon Health & Science University (OHSU) in 2014. While collaborating with Feldner on a childhood mobility study, Sloane heard about CREATE’s postdoctoral research program, applied, and was awarded a 2024–25 fellowship, with Feldner and Amy Pace, from UW’s Speech and Hearing Sciences program, as co-advisors.

Through work with Go Baby Go Oregon, a non-profit organization that modifies ride-on toy cars, adapts toys, and adds sensory materials to books for children with disabilities – and which she now directs – Sloane was introduced to the unique collaboration between therapists and engineers.

Sloane also collaborates with CREATE associate director Katherine M. Steele and CREATE faculty member Kim Ingraham, both UW engineering faculty. She praises – and exemplifies – CREATE’s collaborative environment, which has helped translate her vision to real-world discovery.

For one current project, engineering students are helping equip 20 mobility devices with low-cost trackers that will gather data about when and how the mobility devices are used in children’s homes. This work is supported by an Allen School Postdoc Research Award she received earlier this year.

“Never would I have imagined that, as a physical therapist, I’d be building accelerometer trackers and attaching them to children’s toys,” said Sloane.

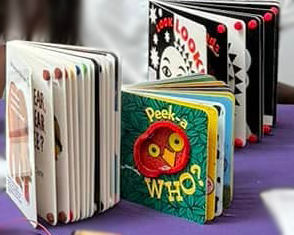

Book adaptation workshop for HuskyADAPT

While a CREATE postdoc, Sloane hosted a HuskyADAPT workshop about book adaptation. For blind and low-vision children encountering printed books, rhinestones and pipe cleaners enhance high-contrast images and provide tactile cues to help children connect related images and words. Additional rhinestones or pompoms on page corners add space between pages, which helps B/LV children turn the pages.

After she demonstrated how to add sensory materials to books, HuskyADAPT members adapted books themselves.

Photo top: Sloane poses with an adapted children’s book during a HuskyADAPT workshop she led. Photo bottom: Adapted books displayed at a HuskyADAPT event.

Learn more

Sloane's NIH K23-funded project is titled “Partnering with Part C Early Intervention to Implement a Powered Mobility Training Program for Young Children with Cerebral Palsy, Gross Motor Function Classification System Level IV–V.”

- Bethany Sloane’s OHSU faculty page

- The NIH K23 Mentored Patient-Oriented Research Career Development Award to support Ph.D.s working toward an independent clinical research program.

- The Allen School Postdoc Research Award to provide postdocs the opportunity to pursue independent research projects carried out by undergraduates.

- Technical paper: Short-Term Powered Mobility Intervention Is Associated With Improvements in Development and Participation for Young Children With Cerebral Palsy: A Randomized Clinical Trial

Blind and Low Vision Teens Join CREATE through YES2 Summer Internships

September 3, 2025

This summer, CREATE hosted three high school/undergraduate interns through a program that focuses on career preparation for young Washingtonians who are blind or visually disabled.

Left to right: YES2 intern Susanna Haley, DUB Research Experience for Undergraduates (REU) student Kaija Frierson, YES2 intern Mohammed Al-jawadi, YES2 intern Kaleb

The Washington Department of Services for the Blind (DSB) program, called Youth Employment Solutions 2, provides experience, instruction, and paid 5-week summer internships. The students share a house, have job placements, and learn skills in using public transportation for travel to work sites. When ready, the students commute to their jobs independently.

Statistics indicate that more than 70% of blind adults are either unemployed or under-employed, in jobs unequal to their education. However, evidence also indicates that blind individuals who have work experience prior to entering the labor market are more likely to be successful at defining, achieving, and maintaining their vocational and career goals.

The YES2 interns helped with research projects led by CREATE Director Jennifer Mankoff, Human Centered Design & Engineering professor Julie Kientz, and CREATE and UW DUB postdoctoral researchers.

Mankoff was impressed with the interns’ technical training. “They are incredible — technically adept — and worked on amazing research projects. One project is to make CAD (computer-aided design) accessible to people who are Deaf-Blind; one is focused on a blocks-based physical design platform; and one is focused on accessible diagrams.”

Hear from three people – two interns and a mentor – who participated in the program at CREATE:

Susanna Haley, YES2 intern

Susanna Haley worked on a project to make flowcharts more accessible for individuals with low vision or blindness. Her goal was to extract and convert flowchart information — nodes such as rectangles for processes and diamonds for decisions and the arrows between nodes — into a list format that is more accessible. Susanna reviewed 32 PDF documents and extracted 342 images that looked like flowcharts.

“I began by having AI generate random flowcharts. Then, I developed a program where users could input the number of nodes and edges, as well as the topic of the flowchart,” she explained. “Next, I applied inclusion and exclusion criteria to narrow down the number of flowcharts that met the definition for the project,” she added.

In the process, Susanna gained several new skills, such as working with OpenAI’s APIs and learning more about the Python programming language. She also learned and used Mermaid to create flowcharts, Flask to build web apps, basic generative AI prompt engineering, and how to transfer output into an HTML file. She said she gained a deeper understanding of Monarch, a multi-line braille display device that creates tactile graphics integrated with braille.
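To illustrate the kind of conversion described above, here is a minimal Python sketch that turns a small Mermaid flowchart into a plain-text list. It is not the project's code: the function name `flowchart_to_list`, the sample chart, and the output wording are all hypothetical, and it only handles an assumed subset of Mermaid syntax (one `-->` edge per line, square brackets for processes, curly braces for decisions).

```python
import re

# One simple Mermaid edge, e.g.  A[Start] --> B{Valid?}  or  B -->|yes| C[Save]
# Assumption: square brackets mark process nodes, curly braces mark decision nodes.
NODE = r"(\w+)(?:([\[{])(.+?)[\]}])?"
EDGE = re.compile(rf"^\s*{NODE}\s*-->\s*(?:\|(.+?)\|\s*)?{NODE}\s*$")

# Hypothetical sample chart, used only for illustration.
SAMPLE = """flowchart TD
    A[Start] --> B{Valid input?}
    B -->|yes| C[Save record]
    B -->|no| A
"""

def flowchart_to_list(mermaid_text):
    """Return one plain-text line per node, listing where its arrows lead."""
    labels = {}  # node id -> (kind, label), in order of first appearance
    edges = []   # (source id, target id, edge label or None)
    for line in mermaid_text.splitlines():
        match = EDGE.match(line)
        if not match:
            continue  # skip the header and any syntax this sketch does not cover
        src, src_br, src_label, cond, dst, dst_br, dst_label = match.groups()
        for node, bracket, label in ((src, src_br, src_label), (dst, dst_br, dst_label)):
            if label is not None:
                kind = "decision" if bracket == "{" else "process"
                labels[node] = (kind, label)
            else:
                # Node referenced by id only; keep any label seen earlier.
                labels.setdefault(node, ("process", node))
        edges.append((src, dst, cond))

    lines = []
    for node, (kind, label) in labels.items():
        outgoing = [
            labels[dst][1] + (f" (if {cond})" if cond else "")
            for src, dst, cond in edges
            if src == node
        ]
        targets = "leads to " + "; ".join(outgoing) if outgoing else "end of chart"
        lines.append(f"{label} ({kind}): {targets}")
    return lines

if __name__ == "__main__":
    for entry in flowchart_to_list(SAMPLE):
        print(entry)
```

A list like this can be read linearly by a screen reader, one node per line, instead of requiring the reader to trace arrows visually.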

Susanna said she enjoyed building a connection to the computer science community and seeing the technology research happening at CREATE and other labs.

“I saw a little better what research at an institution would look like if I decided to pursue that path.” In addition to her many successes in the 5-week internship, she experienced the disappointment of needing to become more exact about which images were actually flowcharts and having to go back and redo some work. “I learned that in research, accuracy matters most,” she noted.

Mohammed Al-jawadi, YES2 intern

Intern Mohammed Al-jawadi worked on an accessible CAD project with CREATE postdoctoral research scientist Carlos Tejada. The project involved creating a library of about sixty 3D models from the Thingiverse website. Al-jawadi collected models such as animals and household items, and then collaborated on building a large language model (LLM) to describe the models.

In the process, Al-jawadi also used the Monarch braille and tactile graphics tablet and found it very useful for a hands-on approach to learning.

“I would say the most valuable thing that I have learned at this internship is how to build and fine-tune an LLM because I am going to college in the fall studying computer science. Learning about LLMs gives me an understanding on how AI models work.”

Kaija Frierson, mentor and DUB undergraduate researcher

Kaija Frierson mentored the YES2 interns, assisting with tasks like building diagrams, running tests, learning the tools used in the lab, and showing them around the space. Frierson, a computer science major at the University of Arkansas, was a DUB undergraduate research intern this summer, advised by Mankoff. While at the UW, she worked on the Accessible Diagram Project, which focuses on finding ways to make computer science diagrams more accessible for experienced educators and learners.

Frierson remarked on the positivity of the interns: “They asked thoughtful questions, picked things up quickly, and were not only hardworking, but also really nice to be around.”

“I really appreciated how the YES2 program gives blind and visually disabled young people the chance to build skills, try things out, and think about their future careers. Being part of it made me realize how valuable programs like this are for preparing students and creating a more inclusive environment,” said Frierson.

DSB partnership with CREATE

The Department of Services for the Blind is a CREATE community partner.

DSB provides career support, independent living services, and youth and family services for Washingtonians who are experiencing vision loss or are Blind, Deaf-Blind, or Low Vision. They also support employers looking to create an inclusive workplace and are always expanding the list of employers who offer summer internships for YES2 students. Contact Janet George for details.

ASSETS 2025: CREATE Papers, Presentations and Demos

August 27, 2025

CREATE faculty, students, and alumni will be well represented at ASSETS 2025 in Denver this fall.

In addition to the papers and experience reports listed below, these CREATE members are part of the ASSETS 2025 Program Committee: CREATE associate director Leah Findlater; CREATE postdoctoral researchers Emma McDonnell (also a CREATE Ph.D. graduate), Jazette Johnson, and Stacy Hsueh; and postdoc alum Tamanna Motahar.

Awards

CREATE's impact on educating accessibility leaders is reflected by awards to our Ph.D. alumni. Congratulations to all!

- Best Demo: Venkatesh Potluri and Dhruv Jain - Venkatesh Potluri (Ph.D. CSE '24) and Dhruv Jain (Ph.D. CSE '22) are co-authors of Demo of RAVEN: Realtime Accessibility in Virtual ENvironments for Blind and Low-Vision People. Full author list: Xinyun Cao, Kexin Phyllis Ju, Chenglin Li, Venkatesh Potluri, Dhruv Jain. Potluri was advised by CREATE Director Jennifer Mankoff; Jain was advised by CREATE associate director Jon E. Froehlich.

- Best Paper: Megan Hofmann - Megan Hofmann (Ph.D. CSE '22) is a co-author of the paper “As Someone Who is Disabled, I am so thankful for Sex Work”: Alternative Approaches to Access Among Disabled Sex-Workers. Full author list: Jay Rodolitz, Vaughn Hamilton, Madiha Tabassum, Ada Lerner, Megan Hofmann. Hofmann was advised by CREATE Director Jennifer Mankoff.

Papers authored by CREATE students, faculty, alumni

A11yShape: AI-Assisted 3-D Modeling for Blind and Low-Vision Programmers

Zhuohao (Jerry) Zhang (CREATE Ph.D. student), Haichang Li, Chun Meng Yu, Faraz Faruqi, Junan Xie, Gene S-H Kim, Mingming Fan, Angus G. Forbes, Jacob O. Wobbrock (CREATE associate director), Anhong Guo, Liang He.

“As Someone Who is Disabled, I am so thankful for Sex Work”: Alternative Approaches to Access Among Disabled Sex-Workers

Jay Rodolitz, Vaughn Hamilton, Madiha Tabassum, Ada Lerner, Megan Hofmann (CREATE Ph.D. graduate).

“Before, I Asked My Mom, Now I Ask ChatGPT”: Visual Privacy Management with Generative AI for Blind and Low-Vision People

Tanusree Sharma, Yu-Yun Tseng, Lotus Zhang (CREATE Ph.D. student), Ayae Ide, Kelly Avery Mack (former CREATE postdoc, Ph.D. graduate), Leah Findlater (CREATE associate director), Danna Gurari, Yang Wang.

Beyond Beautiful: Embroidering Legible and Expressive Tactile Graphics

Margaret Ellen Seehorn, Claris Winston (CREATE graduate student), Bo Liu, Gene S-H Kim, Emily White, Nupur Gorkar (HuskyADAPT Communications Chair), Kate S. Glazko (CREATE Ph.D. student), Aashaka Desai (CREATE Ph.D. student), Jerry Cao (CREATE Ph.D. student), Megan Hofmann (CREATE Ph.D. graduate), Jennifer Mankoff (CREATE Director).

CapTune: Adapting Non-Speech Captions With Anchored Generative Models

Jeremy Zhengqi Huang, Caluã de Lacerda Pataca, Liang-Yuan Wu, Dhruv Jain (CREATE Ph.D. graduate).

CARTGPT: Real-Time Correction of CART Captions Using Large Language Models

Liang-Yuan Wu, Andrea Kleiver, Dhruv Jain (CREATE Ph.D. graduate).

Exploring Disability Culture Through Accounts of Disabled Innovators of Accessibility Technology

Aashaka Desai (CREATE Ph.D. student), Jennifer Mankoff (CREATE Director), Richard E. Ladner (CREATE Director for Education Emeritus)

From Screen Reading to Scene Reading in SceneVR

Melanie Jo Kneitmix (CREATE Ph.D. student), Jacob O. Wobbrock (CREATE associate director)

Minor Resistance: The Everyday Politics and Power Dynamics of Assistive Technology Adoption

Stacy Hsueh (CREATE postdoc), Danielle Van Dusen (CREATE Community Partner), Anat Caspi (CREATE associate director), Jennifer Mankoff (CREATE Director).

Modeling Accessibility: Characterizing What We Mean by “Accessible”

Kelly Avery Mack (former CREATE postdoc, Ph.D. graduate), Jesse J. Martinez (CREATE Ph.D. student), Aaleyah Lewis (CREATE Ph.D. student), Jennifer Mankoff (CREATE Director), James Fogarty (CREATE associate director), Leah Findlater (CREATE associate director), Heather D. Evans, Cynthia L Bennett, Emma J. McDonnell (CREATE postdoc and Ph.D. graduate).

Rethinking Productivity with GenAI: A Neurodivergent Students’ Perspective

Hira Jamshed, Mustafa Naseem, Venkatesh Potluri (CREATE Ph.D. graduate), Robin N. Brewer

SoundNarratives: Rich Auditory Scene Descriptions to Support Deaf and Hard of Hearing People

Liang-Yuan Wu, Dhruv Jain (CREATE Ph.D. graduate)

Temp access: Reflecting on multimodal GAI as an accessibility technology for temporary disability

An experience report from Kate S. Glazko (CREATE Ph.D. student)

VizXpress: Towards Expressive Visual Content by Blind Creators Through AI Support

Lotus Zhang (CREATE Ph.D. student), Zhuohao (Jerry) Zhang (CREATE Ph.D. student), Gina Clepper (CREATE Ph.D. student), Franklin Mingzhe Li, Patrick Carrington, Jacob O. Wobbrock (CREATE associate director), Leah Findlater (CREATE associate director).

Where Can I Park? Understanding Human Perspectives and Scalably Detecting Disability Parking from Aerial Imagery

Jared Hwang (CREATE Ph.D. student), Chu Li (CREATE Ph.D. student), Hanbyul Kang, Maryam Hosseini, Jon E. Froehlich (CREATE associate director).

Posters by CREATE students, faculty, alumni

The Accessibility, Security, and Privacy Nexus: Trends and Opportunities

Kelly Avery Mack (CREATE Ph.D. graduate), Yu-Jie Chen, Lotus Zhang (CREATE Ph.D. student), Danna Gurari, Tanusree Sharma, Yang Wang, Leah Findlater (CREATE associate director)

Accessibility Heuristics for Vibe Coding Interfaces

Shalini Madan, Sreelakshmi Surabiyil Bindu, Venkatesh Potluri (CREATE Ph.D. graduate)

DIY in Action: Understanding Do-It-Yourself Practices from Tangible Symbol Cards

Alexander S.W. Parent, L. Beth Brady, Sarah Ivy, Jennifer Hercman, Amy Hurst (CREATE Advisory Board member)

Re-framing Accessibility from Constraint to Creative Catalyst

Sarah Andrew, Anisa Callis, Anne Spencer Ross (CREATE Ph.D. graduate), Garreth W. Tigwell

Towards a Group Recommender System for Team Formation Foregrounding Students with Social Anxiety

Annuska Zolyomi (CREATE faculty member), Danny Nguyen

Demos by CREATE students, faculty, alumni

Demo of CapTune: Adapting Non-Speech Captions with Anchored Generative Models

Jeremy Zhengqi Huang, Caluã de Lacerda Pataca, Liang-Yuan Wu, Dhruv Jain (CREATE Ph.D. graduate)

EvolveCaptions: Real-Time Collaborative ASR Adaptation for DHH Speakers

Liang-Yuan Wu, Dhruv Jain (CREATE Ph.D. graduate)

GeoQA3: Towards An Accessible AI-based Question-Answering System for Geoanalytics

Chu Li (CREATE Ph.D. student), Rock Yuren Pang (CREATE Ph.D. student), Arnavi Chheda-Kothary (CREATE Ph.D. student), Ather Sharif (CREATE Ph.D. graduate), Henok Assalif, Jeffrey Heer, Jon E. Froehlich (CREATE associate director)

Making Street View Accessible Using Context-Aware, Multimodal AI: A Demo of StreetViewAI

Jon E. Froehlich, Alexander J. Fiannaca, Nimer M Jaber, Victor Tsaran, Shaun K. Ka

RAVEN: Realtime Accessibility in Virtual ENvironments for Blind and Low-Vision People

Xinyun Cao, Kexin Phyllis Ju, Chenglin Li, Venkatesh Potluri (CREATE Ph.D. graduate), Dhruv Jain (CREATE Ph.D. graduate)

Next Steps: People of CREATE Updates

June 11, 2025

Congratulations to students and postdocs on a productive academic year! Join us in celebrating these career steps.

Graduation

Congratulations to CREATE member Anant Mittal, a newly minted Ph.D., for completing his program and successfully defending his thesis! During his program, Mittal, advised by James Fogarty, worked on the design and development of Jod, a videoconferencing platform to facilitate communication in mixed hearing groups. Mittal’s final thesis focused on designing, implementing, and examining systems for communication, collaboration, and coordination in complex settings, such as interactions among people with and without disabilities and patients with chronic conditions collaborating with providers for care.

Postdocs on the move

Congratulations to these postdoctoral researchers as they embark on the next steps in their careers!

Dr. Tamanna Motahar has been appointed to the position of Patrick Clark Endowed Assistant Professor in the School of Computing and Informatics at the University of Louisiana at Lafayette. She expresses deep gratitude to her fellow CREATE postdocs and her mentors, Maya Cakmak, Heather Feldner, and Jennifer Mankoff, for their guidance, mentorship, and support during her postdoctoral fellowship and during the job search.

Dr. Kelly Avery Mack, a former CREATE Ph.D. student, will continue with their accessibility research as an Apple AI/ML Resident this summer. Mack told CREATE, “I appreciate the connections with community partners that CREATE fosters and encourages me to be a part of.”

Dr. Anthony Osuna is moving on to the position of Acting Assistant Professor in the UW Department of Pediatrics. He’ll be based at Seattle Children’s Research Institute in the Center for Child Health, Behavior, and Development, where he will launch a research lab focused on digital health equity for people with autism and intellectual and developmental disabilities (IDD). His work will center on developing and evaluating internet safety and digital literacy interventions, and exploring how generative AI and assistive technologies can be used to promote inclusion and health equity for people with IDD.

We equally celebrate that Stacy Hsueh, Jazette Johnson, and Bethany M. Sloane will continue as postdoctoral researchers with CREATE. Emma J. McDonnell will also continue as a participating CREATE postdoc, with a primary appointment in UW Medicine as a National Library of Medicine Postdoctoral Fellow in Biomedical Informatics and Medical Education.

Community Day 2025 Recap

June 5, 2025

On May 28, CREATE student and faculty researchers gathered with industry and community partners to discuss concerns about and approaches to sustainable accessibility research. Two hybrid panel discussions covered timely topics, followed by research presentations from CREATE students and the CREATE-HuskyADAPT Research Showcase.

Thank you to all who attended Community Day and to our community partners and panel members for lively, informative conversations!

Learn about this year's Community Day and Research Showcase events and subscribe to the CREATE mailing list for updates on accessibility research.

2025 panel discussions

This year, our panel discussions focused on current legal challenges to access and rights and explored how accessible technology research makes it from the lab to the real world. The panels were followed by our annual Research Showcase, co-sponsored by HuskyADAPT, an energetic and enthusiastic sharing of the outstanding work being done in the field of accessible technology here at the UW.

Panel 1: Technology, Access, and the Law

With CREATE Director for Education Mark Harniss moderating, speakers Jonathan Lazar and Lee Tremblay started with the positives of federal rules and laws already in force and difficult to officially roll back.

Jonathan Lazar is a professor in the College of Information at the University of Maryland and is the executive director of the Maryland Initiative for Digital Accessibility and a faculty member in the Human-Computer Interaction Lab. Lazar focuses on technology accessibility for people with disabilities, user-centered design methods, assistive technologies, and law and public policy related to HCI. He has been a CREATE Advisory Board member since 2020.

Lee Tremblay will join the Center for Reproductive Rights in July as a Litigation Fellow. Lee was a Justice Catalyst Fellow at Legal Voice, a Pacific Northwest organization fighting for gender liberation, working at the intersection of disability and reproductive justice in Idaho. Lee has a J.D. from Georgetown University Law Center, where they were President of the Disability Law Student Association and published in the Georgetown Law Technology Review.

While it's unclear whether federal rules will be enforced, there is hope for sustained research and protections at the state and local levels. The speakers noted ample opportunity to get involved and make a difference, including running for library and school boards and for local, regional, and state offices.

These publications were recommended by Lazar:

- The potential role of US consumer protection laws in improving digital accessibility for people with disabilities. Lazar, J. (2019). U. Pa. JL & Soc. Change, 22, 185.

- The Disability Tax and the Accessibility Tax: The Extra Intellectual, Emotional, and Technological Labor and Financial Expenditures Required of Disabled People in a World Gone Wrong… and Mostly Online. Olsen, S. H., Cork, S., Anders, P., Padrón, R., Peterson, A., Strausser, A., & Jaeger, P. T. (2022). Including Disability, 1, 51-86.

Panel 2: Translation: Bringing Research into the World

Moderator Dr. Mary Goldberg opened the conversation by asking panelists what motivated them to get involved in research focused on people with disabilities. Strikingly, personal experiences and core values were the primary factors mentioned.

Our panelists were chosen based on their expertise and success in translating accessibility research; they shared their websites for helpful resources and information:

Kirk Adams, Managing Director at Innovative Impact: "We strive to accelerate the inclusion of people with disabilities in the workforce." Adams also leads the Apex Program, which works to launch blind people in national security.

Michael Bervell, founder and CEO at TestParty: "TestParty automatically scans source code to create more accessible websites, mobile apps, images, and PDFs - all while reducing risk and supplementing in-house or manual vendor audits."

Mary Goldberg, Co-Director at IMPACT Center: "Assistive technology ideas, research, and development can take on different forms, and encompass commercial products, clinical standards and guidelines as well as freeware. So wherever you are in the process, the IMPACT Center can assist you on your journey."

Wilson Ng is a professor of entrepreneurship and Director of the Chair in Entrepreneurship & Disability at IDRAC Business School in Lyon, France. Ng is also an investment advisor at Crowd for Angels, "a UK crowdfunding platform that offers companies and investors opportunities to raise and invest funds in different projects. Our crowdfunding opportunities include shares (equity) and crowd bonds (debt)."

Michele Williams is the owner and Accessibility Consultant of M.A.W. Consulting: "We are on a mission to ensure that every facet of your organization not only meets accessibility compliance standards, but also champions diversity and inclusivity at its core. Think of us as your one-stop-shop for accessibility consulting."

Questions?

Contact us at: kqvoss@cs.washington.edu

GAAD Day 2025 Interview with CREATE Director Jennifer Mankoff

In honor of Global Accessibility Awareness Day (GAAD) on May 15, Melissa Albin from UW-IT Communications sat down with CREATE Director Dr. Jennifer Mankoff to discuss the intersection of computing, accessibility, and disability studies. Mankoff shared personal reflections, insights on culture change, and her hopes for a more inclusive future in tech and beyond.

Dr. Mankoff, a professor in the Information School and the Paul G. Allen School of Computer Science & Engineering, will speak at the UW’s GAAD mid-day program on Thursday, May 15.

- What initially drew you to the intersection of computing, accessibility, and disability studies?

- I was a computer scientist first—and then I became disabled. That personal shift made me start thinking about how technology could better meet my needs. My first faculty position was at UC Berkeley, which was at the heart of the movement to provide people with disabilities with access to higher education and the birthplace of the independent living movement. They already had a disability studies department when I started there in 2001. Being there, I met so many people who introduced me to disability studies and the principles of the disability rights movement. It really spoke to me and shaped how I think about accessibility work. Over time, I’ve expanded that view to include the importance of disability justice as well.

- Given that context, is it frustrating to see disability rights as they are threatened or regressing in some ways?

- The disability community has always been incredibly effective in establishing groups that understand advocacy, that do policy work, and that do the groundwork to support disabled people. They’re ready to stand up for the continued rights of people with disabilities. While there may be threats, there’s also a large group of people engaged in pushing back.

- How has mentorship played a role in your accessibility work?

- For much of my career, I didn’t have disabled mentors in technology or STEM fields. I was often one of the only senior faculty members who was out about being disabled. One exception: I did have the privilege of being mentored by Devva Kasnitz, who was a remarkable leader in the field before she passed away recently. Also, I had non-disabled mentors who supported me. Today, it’s a real privilege to mentor each new generation of disabled students and faculty, many of whom are truly changing the world.

- There seems to be stronger mentorship happening now, especially at UW. Could universities be doing more in this space?

- Absolutely. Higher education still has a long way to go in how it supports disabled undergrads, grad students, faculty, and staff. UW is doing good work—particularly through programs like AccessComputing and DO-IT—but I don’t know of a university that doesn’t still have room to improve.

- Support needs to go beyond the university, too. Conferences, publishers, research environments—they all need better accessibility practices. The change requires advocacy at every level, and collaboration between people who understand these needs and can educate others.

- How can staff at UW better support professors and students when it comes to accessibility?

- It starts with a cultural shift—expecting that materials and platforms are “born accessible” from the start. That means documents, websites, tools—everything—should be accessible the moment they go live. This aligns with what the new DOJ rule and our own Digital Accessibility Initiative are encouraging.

- Once that’s the norm, it becomes natural to teach accessibility in any class where people create content. We’ll graduate students who expect and understand accessibility, and we’ll hire people trained to value it. Until then, we need to keep pointing out opportunities for improvement and keep working together.

- That makes so much sense—it’s like cybersecurity in that it becomes easier when it’s integrated from the beginning.

- Exactly. And it’s not just about digital tools. It’s also about how we treat each other. For instance, if someone needs to work remotely, that is an accommodation that allows excellence and commitment to being a successful part of the team. It’s not about trying to “get out of work.”

- We need to shift our mindset to see accommodations not as exceptions, but as part of building better teams and communities. That kind of attitude shift is as much a part of the culture change that we need as the focus on the way we produce documents and digital content.

- What about long-term support? How do we build sustainable systems for accessibility at UW?

- One thing Devva taught me is that accessibility isn’t just about the person receiving support—it’s about all of us. If someone uses ASL and I don’t understand it, the interpreter is there for me, not for them. I’m the one who needs the translation.

- If we all saw accessibility as a shared responsibility, we’d make more progress. When we stop forcing square pegs into round holes, we make space for everyone to contribute in ways that work for them. That’s where we want to end up.

- That’s such a powerful perspective. Is there anything you wish people would ask you more often about accessibility?

- I wish more people asked disabled people what they actually want. We need to focus on increasing autonomy, agency, and creativity. We need to really consider that access work is not just here to fill a gap. Too much work is based on a deficit model.

- It’s important to recognize that being disabled is a joyful experience of community as much as it is anything else. We’re not just here to be “accommodated”—we’re here to contribute and innovate. Tools should reflect that. If we build tools that only fill gaps in a constrained space, we’re not really providing support for each other.

- And finally, we need to recognize that many barriers are structural. Don’t assume that technology alone can address every issue; technology needs to be part of a broader system of support. Maybe you need to go in and actually change how technology is disseminated or what information is available in order to solve the problem and not just build a tool.

- Are you hopeful about how emerging technologies like AI might help or hinder accessibility?

- On the one hand, people with disabilities are already using AI in powerful, creative ways—often to solve problems no one had tried to address before. But AI also reflects the biases of the people and data behind it. For instance, automated captions might fail multilingual speakers. Resume screeners may down-rank applicants who mention disability—even if they have prestigious qualifications. And these harms often happen without the affected person even knowing. So yes, AI has potential, but we must remain critical and intentional about how it’s used.

- If there’s one thing you want the community to know this Global Accessibility Awareness Day, what would it be?

- As a technologist, I’ll say this: people with disabilities are everywhere. We use all the technology out there. Don’t just think about the technology for people with disabilities as being the stuff that’s solving access problems; think about it as being all the technology, and make all of it accessible. Accessibility shouldn’t just be about “assistive tools”—it should be baked into everything. Whether it’s a creative design tool or a grading system, assume disabled people are going to use it—because we are.

- Building technology this way doesn’t just make things better for people with disabilities; it makes things better for everyone.

This article was reproduced from the interview by Melissa Albin for the Accessibility at the UW website.

James Fogarty inducted into SIGCHI Academy

May 13, 2025

CREATE associate director James Fogarty has been inducted into the SIGCHI Academy Class of 2025. Each class of the ACM Special Interest Group on Computer-Human Interaction represents the principal leaders of the field, whose research has helped shape how we think of HCI.

A central figure in Seattle’s human-computer interaction (HCI) community and beyond, Fogarty has made key contributions in accessibility, sensor-based interactions, interactive machine learning, and personal health informatics. He has played a pivotal role in founding and growing Design, Use, Build (DUB) — the UW’s cross-campus HCI alliance bringing together faculty, students, researchers and industry partners.

“I am honored to be among the SIGCHI Academy Class of 2025,” Fogarty said. “I’m grateful for the amazing students and collaborators that I’ve had the pleasure to work with over the years, advancing HCI, interactive machine learning, personal health informatics and accessibility research.”

James Fogarty, CREATE associate director and professor in the Allen School of Computer Science & Engineering

Important strides in accessibility research

Fogarty was co-advisor on research, led by Anne Spencer Ross, that drew inspiration from work in epidemiology to conduct the first large-scale assessment of accessibility in 10,000 Android apps. The Department of Justice cited the work as part of its updates to the Americans with Disabilities Act.

Fogarty also extended his work on interface understanding and enhancement to demonstrate real-time repair of mobile app accessibility failures. This research helped directly motivate and inform Apple’s launch of accessibility repair in its pixel-based Screen Recognition.

Early AI investigation: 'ahead of its time'

Upon joining the UW's Allen School of Computer Science & Engineering in 2006, Fogarty launched a new research emphasis in interaction with artificial intelligence (AI) and machine learning. Fogarty’s research into new methods for engaging end-users in machine learning training and assessment and understanding difficulties that machine learning developers encounter was considered ahead of its time. The research contributed to what is now known as human-AI interaction before it became a trending topic, and has directly impacted industry guidelines for the field.

“James has made an exemplary impact across research disciplines and industry,” Allen School professor Jeffrey Heer said. “His research prowess, volunteer spirit, deep care, thoughtfulness and community-mindedness have helped guide DUB and advance the HCI community in Seattle and across the globe.”

Fogarty and his collaborators developed Prefab, a system for real-time interpretation and enhancement of graphical interfaces through reverse engineering their pixel-level appearance. Prefab, which earned a 2010 CHI Best Paper Award, was a breakthrough in interface systems research, foreshadowing current work using AI to understand, interact with and enhance graphical interfaces.

This article was excerpted from an Allen School article by Kristine White. Read the full article.

Read more about CREATE contributions to CHI 2025, the 2025 ACM SIGCHI Awards, and DUB and the UW presence at CHI 2025.

GazePointAR makes sense of spoken questions through eye gaze, gestures and past conversations

May 31, 2024

“What’s over there?” “How do I solve this math problem?”

If you try asking a voice assistant (VA) like Siri or Alexa such questions, you won’t get much information. While VAs are transforming human-computer interaction, they can’t see what you’re looking at or where you’re pointing. CREATE Ph.D. student Jaewook Lee has led an evaluation of GazePointAR, a fully functional, context-aware VA for wearable augmented reality (AR) that uses eye gaze, pointing gestures, and conversation history to make sense of spoken questions.

An example interaction with GazePointAR. The user’s query “What is this?” is automatically resolved by using real-time gaze tracking, pointing gesture recognition, and computer vision to replace “this” with “packaged item with text that says orion pocachip original,” which is then sent to a large language model for processing and the response read by a text-to-speech engine.

Lee, along with advisor Jon E. Froehlich and fellow researchers, evaluated GazePointAR by comparing it to two commercial systems. The team also studied GazePointAR’s pronoun handling across three assigned tasks and its responses to participants’ own questions. In short, participants appreciated the naturalness and human-like nature of pronoun-driven queries, although sometimes pronoun use was counter-intuitive.

Lee presented the team’s paper, GazePointAR: A Context-Aware Multimodal Voice Assistant for Pronoun Disambiguation in Wearable Augmented Reality, at CHI ’24, sharing a first-person diary study illustrating how GazePointAR performs in the wild. The paper, whose authors also include Jun Wang, Elizabeth Brown, Liam Chu, and Sebastian S. Rodriguez, enumerates limitations and design considerations for future context-aware VAs.

Taskar project helps pedestrians find accessible routes all over Washington state

April 9, 2025

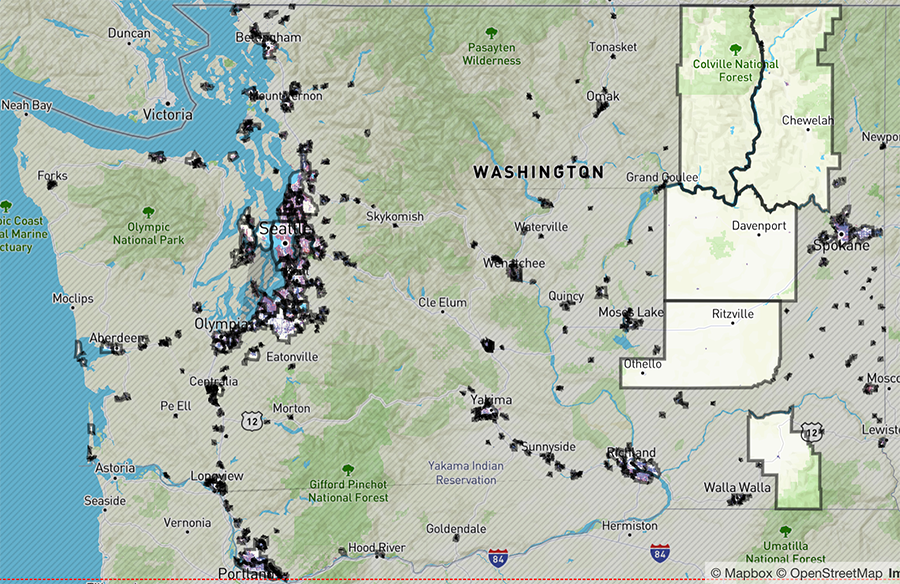

AccessMap is an app and a collection of data sets that let pedestrians tailor routes for their accessibility needs and preferences. Coverage has been spreading across Washington state and now includes a new data set, called OS-CONNECT, for sidewalks and other paths statewide, from Forks on the Olympic Peninsula to Clarkston in the southeast.

AccessMap was created in the Taskar Center for Accessible Technology (TCAT), which is led by CREATE associate director Anat Caspi. When it was launched in 2017, data was limited to parts of Seattle. Over the years, it has expanded to other cities near the Salish Sea, including Everett, Mount Vernon and Bellingham.

“Not only are we including all sidewalks in Washington, which is huge, but we are engaging communities and planners in a massive effort to support data production and the maintenance of this resource long term, to make it sustainable and translatable to other institutions. This way states across the U.S. could start using it.”

Anat Caspi, Director, Taskar Center and a CREATE associate director

The Washington State Legislature (in House Bill 1125) assigned TCAT to build the OS-CONNECT data set, which the team completed well ahead of its projected 2027 goal. The team will now perform deep quality checks, work with the different communities to analyze and interpret what the data means to them, and engage citizens in actions that promote public participation in data and active transportation.

Interactive map of Washington state. Mapped cities and towns are outlined in grey.

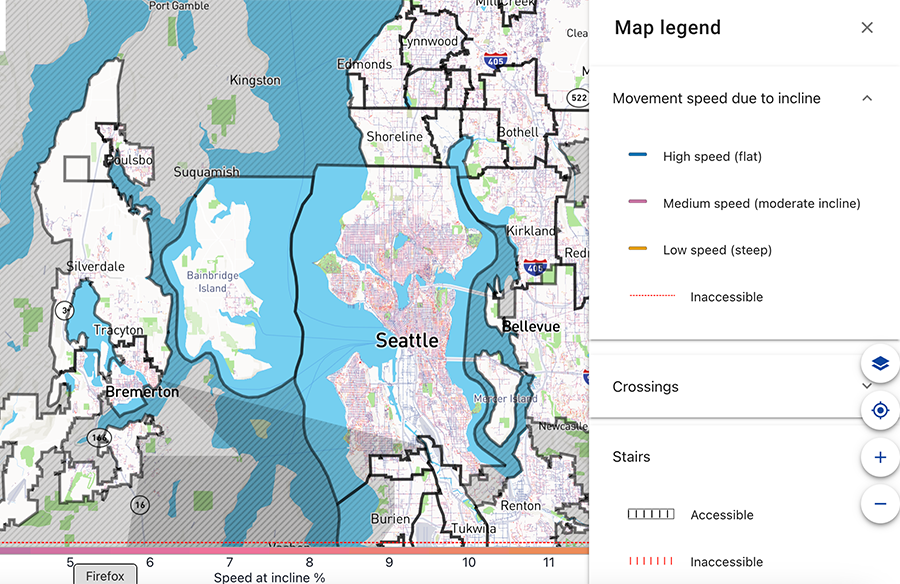

The same map, zoomed in to the Olympic Peninsula, east to Bellevue. The legend is open to show meaning of different route colors.

The Taskar Center launched OS-CONNECT at its annual OpenThePaths conference in March. The conference brings together community members, advocates, planners, researchers and policymakers dedicated to expanding and sustaining pedestrian-friendly infrastructure.

“No state has before used machine learning and human vetting to collect, in a consistent, standardized way, all of the pedestrian infrastructure in that state,” said Caspi, a research principal in the Paul G. Allen School of Computer Science & Engineering, where TCAT is housed. “OS-CONNECT helps us answer the original question the state asked: ‘Who has access to frequent transit?’ And now we can answer many other questions, such as: ‘What type of access do people with diverse needs have to important services like grocery stores, schools and health care?’”

The state compiled OS-CONNECT using TCAT’s OpenSidewalks model, which combines machine learning with human vetting to catalogue pedestrian infrastructure. For instance, using the data set through the AccessMap app, a person using a wheelchair can plan a route only on streets that have sidewalks, don’t have an incline of greater than 5% and have curb ramps for any intersections.

The data can help local governments identify where sidewalks are in poor condition or missing. OS-CONNECT supports Walkshed, an accessibility app for urban planners, and projects such as Complete Streets, a model for equitable infrastructure design, and Vision Zero, a Seattle project to end traffic deaths and serious injuries by 2030.

This article was excerpted from the UW News article by Stefan Milne. For more information, contact Caspi at uwtcat@uw.edu.

Students: Apply for HuskyADAPT Leadership Positions

April 7, 2025

CREATE students are encouraged to join the HuskyADAPT leadership team through a variety of student chair positions. Applications are being accepted through Friday, April 18.

Being on the leadership team involves approximately 5 hours per week of commitment, though this may vary based on your role and upcoming events. The HuskyADAPT chairs are the student leaders who plan and facilitate all HuskyADAPT events, as demonstrated at the Spring Community Meeting.

Current open positions

HuskyADAPT student executive chairs: Coordinate Student Exec Board meetings, serve as the main HuskyADAPT contact, communicate with faculty advisors, organize finances and spearhead funding applications, and mentor/support other officers. Useful skills/experiences include leadership (especially previous leadership with HuskyADAPT), communication, organization, and event management.

Manage HuskyADAPT budgets, including approving purchases, applying for new funding opportunities, organizing our yearly giving campaign, and compiling our annual report.

Lead on-campus and off-campus toy adaptation efforts. Essential skills include the ability to plan and facilitate events (2 events per month, 20 to 50 people per event), expertise in toy adaptation, strong organizational skills, and patience. Helpful experiences include regular attendance at toy adaptation events. Toy adaptation chairs work closely together.

Lead our Yellow Toy Club and help to foster a toy-adaptation community! Plan weekly Yellow Toy Club meetings, manage the club space, and lead twice-a-quarter Yellow Toy Fix It Events. Essential skills include expertise in toy adaptation and organization skills. Helpful experiences include regular attendance at toy adaptation events, Lead Toy Adapter certification, and past participation in Yellow Toy Club.

Lead toy donation and distribution, including collaborations with toy library organizations such as the PNW Adapted Toy Library and a growing collaboration with the King County Library System. Essential skills include expertise in toy adaptation and strong communication and organizational skills. Helpful experiences include regular attendance at toy adaptation events.

Integrate the UW GoBabyGo Leadership team with HuskyADAPT. This may include coordinating volunteers and necessary logistics for GoBabyGo workshops, assisting with marketing development for the GoBabyGo program and workshops, supporting the GoBabyGo design team, and general involvement with the GoBabyGo Leadership team. Helpful experiences/skills include knowledge of the national GoBabyGo organization, the ability to plan and lead events, and a passion for inclusion and the importance of early mobility for children with disabilities. Previous toy or car adaptation experience or electrical/wiring experience is a plus! This position will also require additional planning meetings with the Co-directors of UW GoBabyGo from Rehab Medicine, Shawn Rundell and Heather Feldner.

Lead student design projects that tackle accessibility challenges in our community. This includes determining suitable design projects, creating design teams, and mentoring and teaching technical skills to design teams. Essential skills include design knowledge, human-centered design experience, and organizational skills. Prior experience in human-centered design or on a HuskyADAPT Design Team is recommended.

Lead communications! Manage and update the HuskyADAPT website and social media pages (Twitter, Instagram), create flyers about events occurring throughout the year, and send the bi-weekly emails. Helpful skills include graphic design, social media skills, organization and communication skills.

Lead outreach through partnerships with K-12 students, industry partners, and clinical partners. Note that this includes all toy adaptation and design events held off campus as well as on-campus K-12 outreach such as tours and Discovery Days. Help connect us with other organizations on campus, such as CREATE and the D-Center. Attend monthly Engineering Student Council meetings and CREATE events. Lead special events, like our twice-a-year Design-a-thon and Design Showcases. Essential skills include ability to plan and facilitate events, expertise in toy adaptation, and strong organizational and communication skills. Helpful experiences include enjoying teaching and working with children.

Don't miss this opportunity to get more involved in CREATE and help HuskyADAPT continue its impactful work!

Why get involved?

HuskyADAPT is a student organization, supported by CREATE, that collaborates with individuals with disabilities and community partners on design projects. They provide adapted toys and devices for free to the local community, create low-tech adaptive technologies such as adapted books and low-cost switches, and offer education on accessible design on campus and in the community.

Skill Development: Gain hands-on experience in accessible design, project management, mentoring, and community outreach.

Networking: Connect with professionals, community partners, and fellow students passionate about accessibility.

Leadership Experience: Enhance your leadership skills by taking on roles such as toy donation chair, student executive chair, or outreach chair, which are well-suited to graduate student schedules and responsibilities.

As a current CREATE Ph.D. student and long-time HuskyADAPT leader, I appreciate the opportunity to be involved in the local disability community AND teach others about accessibility. As someone who does research with a lot of local families and young children, I always share HuskyADAPT as a resource to them as well.

Mia Hoffman

New Faculty Join CREATE in 2025

April 2, 2025

We are excited to work with two new faculty members who share CREATE's goal of advancing accessibility through technology while ensuring disabled people are full participants in shaping technological solutions.

Cecilia Aragon, Professor, Human Centered Design & Engineering

A faculty member with a disability who combines technical expertise with a commitment to inclusive design, Dr. Cecilia Aragon shares CREATE's vision of a world where people with disabilities are full participants in shaping tomorrow's world.

Aragon is a professor in Human Centered Design & Engineering and an adjunct professor in three programs: Computer Science & Engineering, Electrical and Computer Engineering, and the Information School. She is also a Senior Data Science Fellow in the eScience Institute and a Co-PI of AccessADVANCE, a program within UW DO-IT that works to improve the systemic status of women faculty with disabilities.

Yohali Burrola-Mendez, Research Scientist, UW Family Medicine

Dr. Yohali Burrola-Mendez is a research scientist at UW Family Medicine. Her research spans rehabilitation, disability studies, assistive technology, wheelchair service delivery, health equity, adult training, and the development of assessment tools.

Burrola-Mendez has worked in low- and middle-income countries, where she has co-led projects funded by USAID, WHO, UNICEF Mexico, and various non-governmental organizations. Burrola-Mendez holds a Ph.D. in Rehabilitation Sciences and a Master of Science with a concentration in Musculoskeletal Diseases. Additionally, she earned a Certificate in Global Health Practice from the Johns Hopkins Bloomberg School of Public Health and completed postdoctoral training at the CHU Sainte-Justine Research Center, the largest mother-and-child health facility in Canada, affiliated with the University of Montreal.

"Dude, what am I gonna do with a touchscreen? It's crazy. I can't feel anything."

March 26, 2025